TL;DR:

Build agents by composing small, well-instrumented capabilities, enforcing runtime controls, and designing for predictable state transitions. These patterns reduce surprises in production and make agents auditable, debuggable, and cost-effective.

Intro

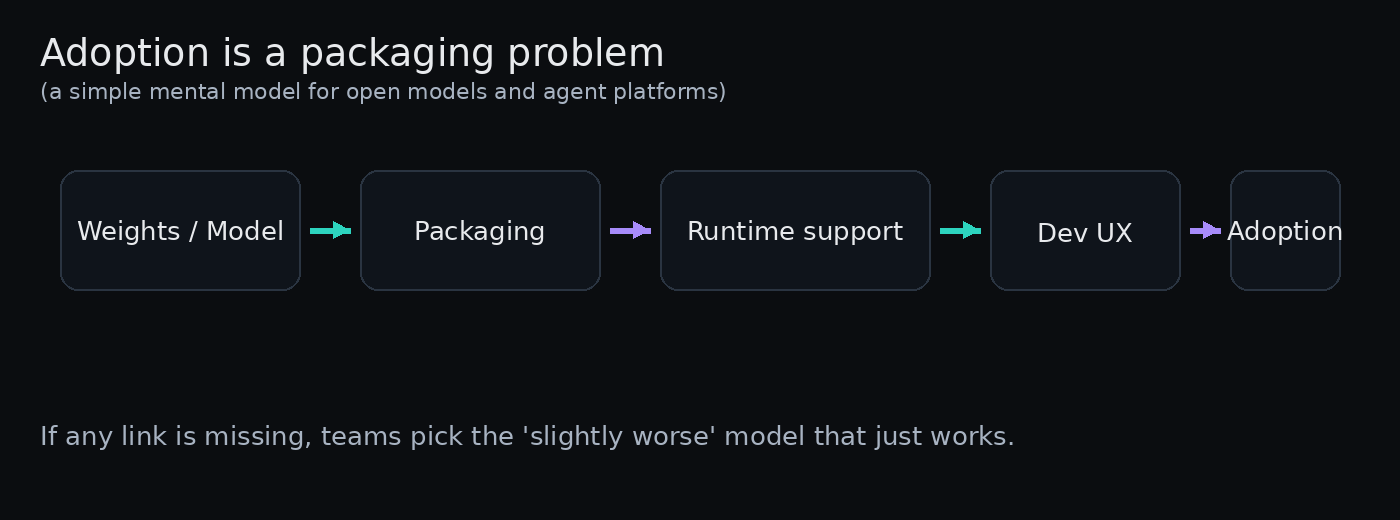

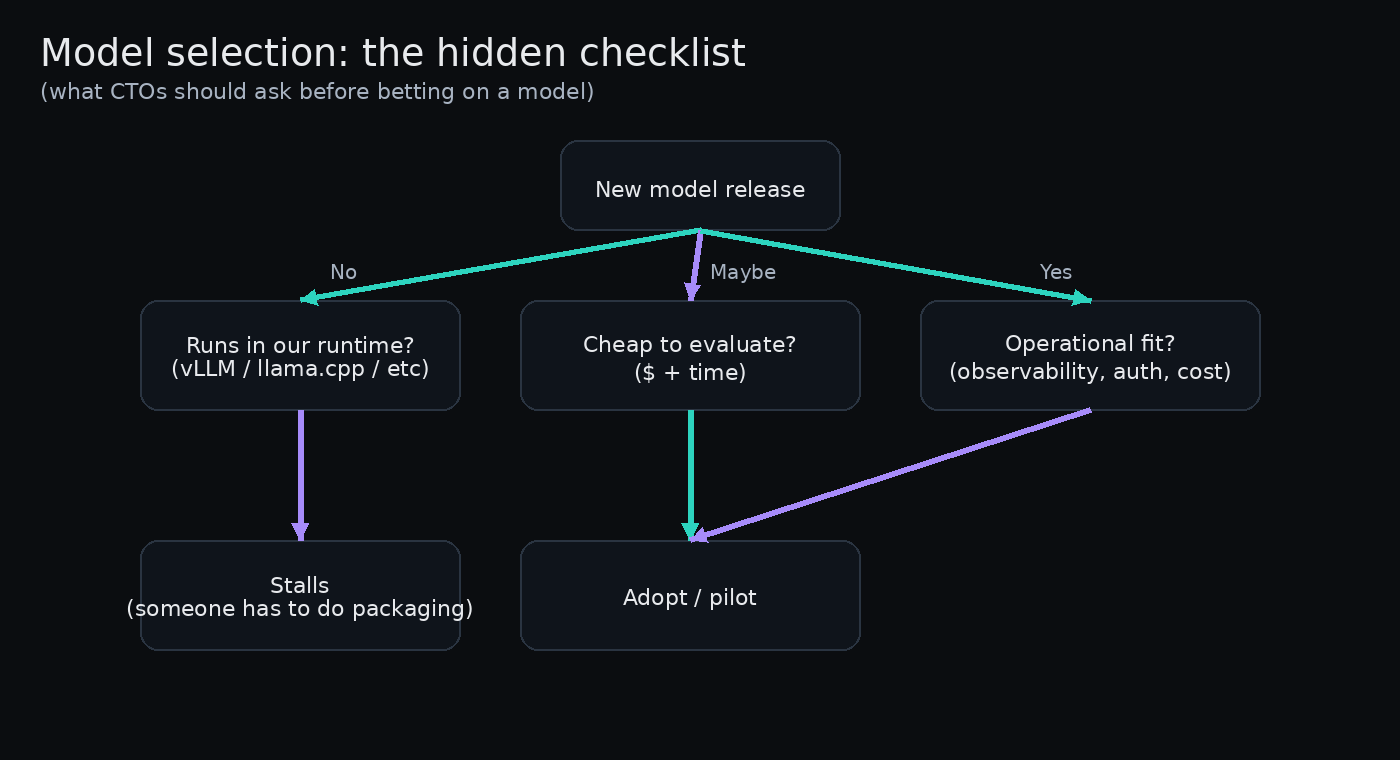

CTOs evaluating agent deployments quickly run into two recurring realities: agents are powerful automation that can dramatically reduce manual toil, and they are a new class of system with emergent failure modes. Successful production agents aren’t just “smart” — they are engineered with patterns that make them observable, bounded, and interoperable with existing operational practices. This article distills pragmatic design patterns I’ve seen work across cloud orchestration, customer service automation, and payment platforms, and shows how those patterns translate into measurable operational improvements.

Design for composable, bounded capabilities

Start by decomposing an agent into narrowly-scoped capabilities (skills, workers, or modules). Each capability exposes a small, well-documented interface: inputs, outputs, preconditions, expected side effects, and idempotency properties. This sounds obvious, but teams often hand agents unrestricted access to resources and then wonder why debugging is impossible.

Key design choices here are explicit schemas and idempotent actions. Use typed APIs or JSON schemas for every action the agent can request. Require an explicit “dry-run” flag for potentially destructive operations so you can validate plans before execution. Enforce idempotency by design: if an agent retries “create-user,” the back-end responds with a stable result rather than creating duplicates.

Benefits: smaller blast radius, simpler testing, and easier role-based permissions. In practice, teams replacing monolithic agents with composable capabilities saw mean time to resolution for agent-induced incidents fall by 30–60% because root causes were isolated to a single module.

Operational controls: policies, limits, and circuit breakers

Production agents must operate under operational constraints. Explicitly encode runtime policies: quota limits, cost budgets, concurrency caps, and real-time safety checks. These controls should be enforced outside the core reasoning loop so they cannot be bypassed by a clever plan. In other words, separate “what to do” (the plan) from “whether to do it” (the policy enforcement layer).

Circuit breakers and throttles are simple but effective. A circuit breaker can open when an upstream service has elevated error rates, forcing the agent to shift to a degraded mode (notify humans, stash the plan, or run a read-only analysis). Throttling helps control cost and latency: agents that invoke downstream ML models or third-party APIs should be able to back off based on budget signals.

Evidence: one cloud-ops team added a cost-aware throttle and saw API spend from automated remediation drop 22% without losing remediation coverage. Another team reduced the frequency of runaway reconcilers by instituting a simple retry budget per incident, turning unpredictable spikes into bounded queues.

Observe, record, and enable deterministic replay

Agents make decisions; production teams must be able to replay those decisions to understand what happened and why. Instrument every decision point: inputs, intermediate reasoning states, deterministic plan fragments, and final execution calls. Store those artifacts in a compact, structured format so you can reconstruct a run without storing raw model outputs or full logs.

Deterministic replay is not about believing the agent is always right; it’s about making debugging tractable. Keep a canonical “run record” that contains the request, the validated plan, policy evaluations, and the execution log with timestamps. Add a replay mode that replays the plan in a sandboxed environment (dry-run) and verifies that the same sequence of validations and guardrails triggers the same outcomes.

Practical wins from this pattern include faster incident postmortems and safer rollbacks. Teams that implemented structured run records cut postmortem analysis time in half and were able to add unit-like regression tests that reproduce prior failures.

- Make capabilities small and well-typed; enforce idempotency and dry-run support.

- Separate planning from policy enforcement; implement quota, cost, and safety checks externally.

- Add circuit breakers and retry budgets to bound failure modes.

- Record structured run artifacts that enable deterministic replay and automated tests.

- Design interaction models that prefer human escalation over irreversible actions in ambiguous situations.

- Use canaries and progressive rollouts for new capabilities; monitor business SLOs as well as technical metrics.